Is NVDA the only way to invest in AI? - Part 2 NVDA Q2 earnings brief

Portfolio: Highlights, trends and comparative performance findings from recent earnings reports

In Part 1 of this series, we covered the basics of AI and how it functions. We then looked more closely at who is actually making money in AI right now and who (other than NVDA) is producing the much-coveted GPUs. As we continue our exploration (in next week’s post), we will work out which are the better AI investing opportunities out there and identify some of the worst to avoid. We will also try to map out the potential AI adoption cycle and calendar to give us a sense of how things could play out over the next 2-3 years.

But first, let’s take a closer look at NVDA’s Q2 business performance…

One cannot be a savvy AI investor without reviewing and understanding NVDA’s business portfolio. This company is at ground zero in the AI space, and how they perform and what they tell us give us a broader state-of-the-state grasp of AI.

NVDA’s CEO, Jensen Huang, has been talking about AI for years. But most investors were not paying attention to the vision he was painting about the value that AI could bring to our lives and to businesses around us. They continued shunning the stock by focusing on the company’s gaming, desktop and crypto mining business. Well, all that changed over the past two quarters.

Irrespective of whether you are an NVDA bull or bear, you have to respect their report.

NVDA told us that most of the existing data centers will have to be re-architected, redesigned and upgraded to truly support generative AI for business usage, to the tune of about $1T in capital spend over the next 3-5 years.

Cloud computing is making a major paradigm shift from general-purpose computing to accelerated computing and generative AI.

In today’s world, programmers write software code to control CPUs to read data and then make business calculations and decisions. This processing happens in a largely sequential manner, one data field at a time, one software command at a time. In contrast, AI GPUs are able to parallel-process many computing commands, using a large number of cores to efficiently process vast amounts of data at the same time. For example, a CPU-based software program processes an image one pixel at a time, while a GPU-based AI program processes the entire image at once.
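To make the sequential-versus-parallel contrast concrete, here is a minimal Python sketch. It is only an analogy: the `brighten` operation, the tiny sample image and the use of CPU threads as stand-ins for GPU cores are all illustrative assumptions, and real GPU code would be written with something like CUDA.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel, amount=40):
    """Brighten one grayscale pixel, clamping to the 0-255 range."""
    return min(255, pixel + amount)


def process_sequential(image):
    """CPU-style: visit every pixel one after another."""
    return [[brighten(p) for p in row] for row in image]


def process_parallel(image, workers=4):
    """GPU-style idea: hand independent rows to several workers at once.

    Threads only stand in for GPU cores here; this is not GPU code.
    """
    def brighten_row(row):
        return [brighten(p) for p in row]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves row order, so the output image is not scrambled.
        return list(pool.map(brighten_row, image))


# Hypothetical 2x3 grayscale image, purely for illustration.
image = [[0, 100, 200], [250, 30, 60]]
print(process_sequential(image))
print(process_parallel(image))
```

Both functions return the same image. The point is the structure: because each pixel can be computed independently, the work can be split across as many cores as the hardware offers, which is what a GPU does at a far larger scale. This sketch will not run faster in Python; it only shows the shape of the idea.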

LLMs are first trained on large sets of training data (called the training phase) and then deployed (called the inference phase) in specialised data centers to read real-time business data and make independent business decisions, with the LLM getting smarter and more self-sufficient over time without the need for additional programming.

NVDA’s H100 GPUs and full stack AI solutions (including their networking solutions, switching technologies and CUDA software) are currently the only products in the market that can provide these capabilities.

Let’s take a closer look at NVDA’s Q2 earnings report to understand why…

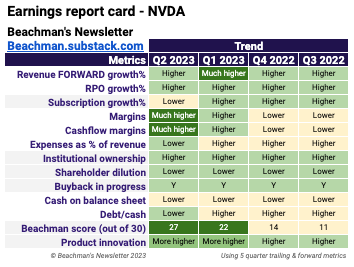

Q2 earnings report card

See detailed financial map at the bottom of this post.

Revenue: Q2 revenue was $13,507M, up 101% yoy and 88% qoq. They beat Q2 consensus estimates by a full 20%.

Gaming revenue was $2,486M, up 22% yoy and 11% qoq, reflecting demand for the GeForce RTX 40 Series GPUs based on the Nvidia Ada Lovelace architecture and normalization of channel inventory levels.

Data center revenue jumped to $10,323M, up 171% yoy and 141% qoq, led by strong GPU purchases from hyperscalers and consumer internet companies and by networking revenue, which on its own increased 94% yoy and 85% qoq.

Professional Visualization revenue was $379M, down 24% yoy but up 28% qoq, reflecting lower sell-in to partners balanced by sequentially stronger enterprise workstation demand and the ramp of Nvidia RTX products based on the Ada Lovelace architecture.

Automotive revenue came in at $253M, up 15% yoy but down 15% qoq. The yoy growth was primarily driven by sales of self-driving platforms, while the sequential decline reflects lower overall auto demand, particularly in China.
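To put the segment numbers above in proportion, here is a small sketch that computes each segment's share of total Q2 revenue. The figures are the ones quoted in this post; the function name and the "not itemized" bucket are my own labels, and the remainder is simply whatever the four segments do not add up to.

```python
# Q2 revenue in $M, as quoted in this post.
TOTAL_REVENUE = 13_507
SEGMENTS = {
    "Data center": 10_323,
    "Gaming": 2_486,
    "Professional Visualization": 379,
    "Automotive": 253,
}


def segment_mix(segments, total):
    """Return each segment's share of total revenue, in percent."""
    return {name: 100 * revenue / total for name, revenue in segments.items()}


mix = segment_mix(SEGMENTS, TOTAL_REVENUE)

# Revenue that the four segments listed here do not account for.
unallocated = TOTAL_REVENUE - sum(SEGMENTS.values())

for name, pct in mix.items():
    print(f"{name:<28}{pct:5.1f}%")
print(f"{'Not itemized here':<28}{100 * unallocated / TOTAL_REVENUE:5.1f}%")
```

On these numbers, data center is roughly 76% of the quarter's revenue and gaming about 18%, which shows how much of the NVDA story now rides on a single segment.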

Forward estimates: The Q3 revenue estimate was revised upwards to $16,320M, up 175% yoy. The full-year 2023 estimate was updated to $54,319M, up 101% yoy. I consider all these forward estimates suspect. Imo, they are bound to get revised higher, at least in the short term. Analysts’ estimates are all over the place right now…everyone is guessing.
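One way to sanity-check these forward estimates is to back out what they imply. The sketch below uses only numbers quoted in this post; the helper names are hypothetical, and the prior-period figures it prints are implied by the growth rates, not taken from NVDA's filings.

```python
def implied_base(current, growth):
    """Back out the comparison-period value from a figure and its % growth."""
    return current / (1 + growth / 100)


def pct_growth(current, base):
    """Percentage growth from base to current."""
    return 100 * (current / base - 1)


Q2_REVENUE = 13_507    # $M, reported
Q3_ESTIMATE = 16_320   # $M, consensus estimate quoted above
FY_ESTIMATE = 54_319   # $M, full-year estimate quoted above

q3_prior_year = implied_base(Q3_ESTIMATE, 175)      # implied year-ago Q3 revenue
fy_prior_year = implied_base(FY_ESTIMATE, 101)      # implied prior full-year revenue
q3_sequential = pct_growth(Q3_ESTIMATE, Q2_REVENUE)  # implied qoq growth in Q3

print(f"Implied year-ago Q3 revenue: ${q3_prior_year:,.0f}M")
print(f"Implied prior full-year revenue: ${fy_prior_year:,.0f}M")
print(f"Implied Q3 sequential growth: {q3_sequential:.1f}%")
```

The Q3 estimate works out to only about 21% sequential growth, a sharp slowdown from the 88% qoq just reported.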

There is a growing thesis that NVDA has perhaps pulled forward about 2 years’ worth of data center demand, similar to how cloud stocks did during the COVID pandemic. I am not sure I agree with this POV because it assumes a zero-sum game between current CPU data centers and future AI GPU data centers.

NVDA’s future revenues are constrained not by demand, but by supply from its fab partners, TSMC and Samsung. TSMC is on track to double their production capacity in 2024 and 10x it in the next 5 years.

RPO: RPO (remaining performance obligations) rose to $717M, up 11% yoy, marking a nice five-quarter recovery.

Margins: Margin expansion picked up pace in Q2. Gross margin jumped to 70% and is expected to rise further to 71-72% in Q3 due to increasing sales in the higher-margin data center segment. Operating income came in at $6,800M, a 50% operating margin. EBITDA was also $6,800M, a 50% margin. Net income rose to $6,188M, a 46% net margin. Expenses as a percentage of total revenues turned nicely lower in Q2. Even stock-based compensation is well in check at only 6% of total revenues.
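For anyone who wants to reproduce the margin percentages, here is a quick sketch using the dollar figures quoted in this post. The gross profit and stock-based compensation dollar amounts are not quoted anywhere above; they are backed out from the rounded 70% and 6% figures, so treat them as approximations.

```python
REVENUE = 13_507  # Q2 revenue, $M


def margin_pct(amount, revenue=REVENUE):
    """Express a dollar amount as a percentage of revenue."""
    return 100 * amount / revenue


operating_margin = margin_pct(6_800)
net_margin = margin_pct(6_188)

# Working backwards from the rounded percentages in the text (approximate).
implied_gross_profit = 0.70 * REVENUE
implied_sbc = 0.06 * REVENUE

print(f"Operating margin: {operating_margin:.1f}%")
print(f"Net margin: {net_margin:.1f}%")
print(f"Implied gross profit: ~${implied_gross_profit:,.0f}M")
print(f"Implied stock-based compensation: ~${implied_sbc:,.0f}M")
```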

Cashflows: Cash flow margins expanded for the second quarter in a row. Operating cash flow rose to $6,348M, a 47% margin. Free cash flow increased to $6,059M, a 45% margin. Free cash flow per diluted share more than doubled to $2.42.
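The per-share figure can be checked against the diluted share count given later in this post (2,499M). The sketch below also derives two numbers the post does not state: implied capital spending, which assumes free cash flow is simply operating cash flow minus capex, and free cash flow conversion, meaning free cash flow divided by net income. Both are my own illustrative additions.

```python
# All dollar figures in $M, share count in millions, as quoted in this post.
OPERATING_CASH_FLOW = 6_348
FREE_CASH_FLOW = 6_059
NET_INCOME = 6_188
DILUTED_SHARES = 2_499

fcf_per_share = FREE_CASH_FLOW / DILUTED_SHARES

# Assumption: FCF = operating cash flow - capex, so the gap is capex.
implied_capex = OPERATING_CASH_FLOW - FREE_CASH_FLOW

# How much of reported net income showed up as free cash flow.
fcf_conversion = FREE_CASH_FLOW / NET_INCOME

print(f"FCF per diluted share: ${fcf_per_share:.2f}")
print(f"Implied capex: ${implied_capex}M")
print(f"FCF conversion of net income: {fcf_conversion:.1%}")
```

A conversion rate close to 100% suggests the reported earnings are largely backed by cash rather than accounting adjustments.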

Cash and debt: NVDA has continued to add cash to their balance sheet over the past four quarters. In Q2, they had $16,023M in cash and lowered their total debt to $10,746M. Their debt-to-cash ratio dropped to 0.67…now within my preferred 0.75 range.
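The ratio is simply total debt divided by cash. A minimal sketch follows, with one assumption flagged: it treats 0.75 as a ceiling, which is my reading of "preferred 0.75 range" rather than something spelled out above.

```python
CASH = 16_023        # $M
TOTAL_DEBT = 10_746  # $M
PREFERRED_MAX = 0.75  # assumption: read as an upper limit on debt-to-cash


def debt_to_cash(total_debt, cash):
    """Total debt per dollar of cash on the balance sheet."""
    return total_debt / cash


ratio = debt_to_cash(TOTAL_DEBT, CASH)
net_cash = CASH - TOTAL_DEBT  # cash left over if all debt were repaid today

print(f"Debt-to-cash ratio: {ratio:.2f}")
print(f"Net cash: ${net_cash:,}M")
print(f"Within preferred range: {ratio <= PREFERRED_MAX}")
```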

Shareholder value: Shareholder dilution continues to decrease, with total diluted shares at 2,499M, down 1% yoy.

Institutional ownership ticked higher to 69%. I expect this number to keep rising going forward.

The company bought back $3.1B in stock (7.5M shares) in Q2 and announced a new $25B share repurchase program, bringing the total in the kitty for further buybacks to $29B.
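A little arithmetic on the buyback numbers, as a rough sketch. The average price is implied by the rounded $3.1B and 7.5M figures, so it is approximate, and the amount left under the earlier authorization is inferred from $29B minus $25B rather than stated in the report.

```python
BUYBACK_SPEND_M = 3_100      # $M spent on repurchases in Q2
SHARES_REPURCHASED_M = 7.5   # millions of shares bought back
DILUTED_SHARES_M = 2_499     # millions of diluted shares
NEW_PROGRAM_B = 25           # $B, newly announced program
TOTAL_AVAILABLE_B = 29       # $B, total available for buybacks

# Implied average price paid per share (approximate, from rounded inputs).
average_price = BUYBACK_SPEND_M / SHARES_REPURCHASED_M

# Inferred remainder of the previous authorization.
prior_remaining_b = TOTAL_AVAILABLE_B - NEW_PROGRAM_B

# Q2 repurchases as a percentage of the diluted share count.
pct_of_shares = 100 * SHARES_REPURCHASED_M / DILUTED_SHARES_M

print(f"Implied average buyback price: ${average_price:.2f}")
print(f"Left on prior authorization: ${prior_remaining_b}B")
print(f"Q2 buyback as % of diluted shares: {pct_of_shares:.2f}%")
```

The implied average price of roughly $413 per share gives a sense of the levels at which management was willing to buy.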

Marketshare:

AMD recently estimated the data center market TAM at $150B by 2027. Morningstar estimates that NVDA will capture about $41B of data center revenue in 2023, followed by $60B in 2024, rising to $100B by 2027. As per Mercury Research, NVDA now owns an 80% marketshare in the GPU space.
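Putting the two estimates side by side gives a rough implied share and growth path. This is a sketch under a big assumption: that AMD's TAM and Morningstar's revenue estimates measure the same market, which may not be the case. The helper name is hypothetical.

```python
TAM_2027_B = 150  # $B, AMD's data center TAM estimate for 2027
NVDA_DC_REVENUE_B = {2023: 41, 2024: 60, 2027: 100}  # $B, Morningstar estimates


def cagr_pct(start, end, years):
    """Compound annual growth rate, in percent."""
    return 100 * ((end / start) ** (1 / years) - 1)


share_of_2027_tam = 100 * NVDA_DC_REVENUE_B[2027] / TAM_2027_B
growth_2024 = 100 * (NVDA_DC_REVENUE_B[2024] / NVDA_DC_REVENUE_B[2023] - 1)
cagr_2023_2027 = cagr_pct(NVDA_DC_REVENUE_B[2023], NVDA_DC_REVENUE_B[2027], 4)

print(f"Implied share of 2027 TAM: {share_of_2027_tam:.1f}%")
print(f"Implied 2024 growth: {growth_2024:.1f}%")
print(f"Implied 2023-2027 CAGR: {cagr_2023_2027:.1f}%")
```

On these estimates, NVDA would hold about two-thirds of the 2027 market, with data center revenue compounding at roughly 25% a year from 2023.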

AMD is the closest competitor to NVDA today. Intel has struggled to build a high-end GPU and may have something they could perhaps launch in 2025.

NVDA’s proprietary CUDA software platform (including a variety of libraries, compilers, frameworks, and development tools) allows AI professionals to build their models and only runs on NVDA GPUs. This gives NVDA a wide moat due to very high customer switching costs if they want to use a competitor’s GPU.

AI data centers also need hyper-fast and large-bandwidth networking to support the immensely powerful GPU processing. NVDA offers their full-stack DGX Cloud solution, where they can run a portion of the customer’s data center to optimize AI workloads. This could position them to become an AI cloud computing leader, even potentially competing with hyperscalers like AWS or Azure.

In order to understand why NVDA is considered the leader in the AI space, we have to go back to what CEO Jensen Huang said in their Q1 report.

“The computer industry is going through two simultaneous transitions — accelerated computing and generative AI. A trillion dollars of installed global data center infrastructure will transition from general purpose (CPUs) to accelerated computing (GPUs) as companies race to apply generative AI into every product, service and business process.”

Their data center family of products includes the H100, Grace CPU, Grace Hopper Superchip, NVLink, Quantum 400 InfiniBand and BlueField-3 DPU. These are all seeing very high demand from hyperscalers and enterprise customers. Hyperscalers alone represent about 50% of NVDA’s data center sales.

NVDA is not expecting a major negative impact on their China sales from pending export restrictions, although that risk is something to keep an eye on. According to the FT, “China’s internet giants are rushing to acquire high-performance Nvidia chips vital for building generative artificial intelligence systems, making orders worth $5bn in a buying frenzy fuelled by fears the US will impose new export controls. Baidu, ByteDance, Tencent and Alibaba have made orders worth $1bn to acquire about 100,000 A800 processors from the US chipmaker to be delivered this year, according to multiple people familiar with the matter. The Chinese groups had also purchased a further $4bn worth of the graphics processing units to be delivered in 2024, two people close to Nvidia said. The A800 is a weakened version of Nvidia’s cutting-edge A100 GPU for data centres. Due to export restrictions imposed by Washington last year in a bid to choke Beijing’s technological ambitions, Chinese tech companies are only able to buy A800s, which have slower data transfer rates than A100s.”

In this Q2 earnings report, they announced several marketplace collaborations with companies like Snowflake, ServiceNow, Accenture, VMware, Amazon AWS, Microsoft Azure, WPP, SoftBank, Hugging Face, Pixar, Adobe, Apple, AutoDesk, Xpeng and MediaTek.

Product: As expected, there were several product updates in the Q2 report.

Their new L40S GPU-based servers are already shipping.

The GH200 Super Chip is in full production and is expected to be available in Q1 2024. It contains 90% Nvidia GPUs and 10% ARM CPUs.

NVDA’s GH200 supercomputer for complex AI will be available in Q3, with a next-generation version launching in Q2 2024. It connects up to 256 GH200 chips to process in parallel and deliver enhanced cost savings and performance.

They launched Nvidia Spectrum-X, an accelerated networking platform designed to improve the performance and efficiency of Ethernet-based AI clouds, which is shipping this quarter.

They unveiled Nvidia MGX, a server reference design available in Q3 that lets system makers quickly and cost-effectively build more than 100 server variations for AI, HPC and NVIDIA Omniverse applications.

They launched Nvidia AI Workbench, an easy-to-use toolkit allowing developers to quickly create, test and customize pre-trained generative AI models on a PC or workstation and then scale them, as well as Nvidia AI Enterprise 4.0, the latest enterprise version of the software.

NVDA is actively working on bringing generative AI to its gaming products via the development of its “Avatar Cloud Engine” (ACE).

They have begun shipping the GeForce RTX 4060 GPUs, bringing to gamers Nvidia’s Ada Lovelace architecture and DLSS.

They announced three new desktop RTX GPUs based on the Ada Lovelace architecture — NVIDIA RTX 5000, RTX 4500 and RTX 4000 — to deliver the latest AI, graphics and real-time rendering, which are shipping in Q3.

They also issued a major release of the Nvidia Omniverse platform, with new foundation applications and services for developers and industrial enterprises to optimize and enhance their 3D pipelines with OpenUSD and generative AI.

As a reference, I provided a simple primer on Nvidia’s products in this post.

Valuations: Taking into consideration enterprise value, gross profits and forward growth, the stock is NOT expensive right now, even though their current PE is a rich 111 and their forward PE is a bit expensive at 58. Morningstar increased their fair value estimate by 60% to $480.
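The gap between the trailing and forward PE says something about expected earnings growth. Here is a rough sketch, assuming both ratios use the same share price so that their ratio reflects only the expected change in earnings per share. It also backs out Morningstar's previous fair value from the 60% increase. Neither derived number is quoted in the report.

```python
TRAILING_PE = 111
FORWARD_PE = 58
NEW_FAIR_VALUE = 480      # $, Morningstar's updated fair value
FAIR_VALUE_INCREASE = 60  # %, size of the increase

# Same price in both ratios: trailing/forward = forward EPS / trailing EPS.
implied_eps_growth = 100 * (TRAILING_PE / FORWARD_PE - 1)

# Undo the 60% increase to recover the earlier fair value.
prior_fair_value = NEW_FAIR_VALUE / (1 + FAIR_VALUE_INCREASE / 100)

print(f"Implied forward EPS growth: {implied_eps_growth:.1f}%")
print(f"Implied prior fair value: ${prior_fair_value:.0f}")
```

In other words, the forward PE of 58 already bakes in earnings nearly doubling, which is worth keeping in mind when deciding which metric to lean on.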

The question we have to ask ourselves is which valuation metric we should use for our own buying, selling and trimming plans.

Beachman score: The stock’s Beachman score …